Researchers have discovered innovative prompting methods in a study of 26 tactics, such as offering a tip, that significantly improve responses so they align more closely with users’ intentions.

A research paper titled “Principled Instructions Are All You Need for Questioning LLaMA-1/2, GPT-3.5/4” details an in-depth exploration of how to optimize instructions for large language models. The researchers, from the Mohamed bin Zayed University of Artificial Intelligence, tested 26 prompting strategies and then measured the accuracy of the results. All of the strategies performed at least reasonably well, and some of them improved output by more than 40%.

OpenAI recommends multiple tactics for getting the best performance from ChatGPT, but nothing in its official documentation matches any of the 26 tactics the researchers tested, including being polite and offering a tip.

Does being polite to ChatGPT get better responses?

Are your requests polite? Do you say please and thank you? Anecdotal evidence suggests that a surprising number of people phrase their ChatGPT requests with a “please” and add a “thank you” after receiving a response.

Some people do it out of habit. Others believe that the language model is influenced by the user’s interaction style, which is then reflected in the output.

In early December 2023, someone on X (formerly Twitter) posting as Thebes (@voooooogel) ran an informal, non-scientific test and found that ChatGPT gives longer responses when the prompt includes an offer of a tip.

The test was by no means scientific, but it was a fun thread that inspired a lively discussion.

The tweet includes a graphic documenting the results:

Saying that no tip would be offered resulted in a response 2% shorter than the baseline. Offering a $20 tip resulted in a 6% increase in output length. Offering a $200 tip produced 11% longer output.

So a couple of days ago I made a crappy post about tipping chatgpt, and someone replied “huh, would this actually help performance”

so i decided to try it and sure enough it WORKS WTF pic.twitter.com/kqQUOn7wcS

— thebes (@voooooogel) December 1, 2023
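The percentages in Thebes’ graphic are relative changes in response length compared with a baseline prompt. The Python sketch below shows that arithmetic; the `relative_change` function and the character counts are hypothetical, picked only so the printed figures match the reported ones, and are not data from the thread.

```python
def relative_change(baseline_len: int, variant_len: int) -> float:
    """Percentage change of a variant's output length relative to the baseline."""
    if baseline_len <= 0:
        raise ValueError("baseline length must be positive")
    return (variant_len - baseline_len) / baseline_len * 100.0


# Hypothetical character counts, chosen only to illustrate the arithmetic.
baseline = 1000
for label, length in [("no tip", 980), ("$20 tip", 1060), ("$200 tip", 1110)]:
    print(f"{label}: {relative_change(baseline, length):+.0f}%")
# → no tip: -2%
# → $20 tip: +6%
# → $200 tip: +11%
```

A relative change is used rather than a raw difference so that results stay comparable even if the baseline response length varies between runs.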

The researchers had a legitimate reason to investigate whether politeness or offering a tip made a difference. One of the tests was to avoid politeness and simply be neutral, without words like “please” or “thank you,” which resulted in improved responses from ChatGPT. This prompting method gave a 5% improvement.
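Principle 1 amounts to dropping courtesy phrases from a prompt. As a rough illustration, assuming you wanted to apply it automatically (the paper does not describe any such tool), a hypothetical `make_direct` helper could strip the phrases the principle lists using regular expressions. This is a naive sketch: it only knows a fixed phrase list and won’t catch other polite constructions such as “could you.”

```python
import re

# Hypothetical phrase list, based on the examples given in Principle 1.
POLITE_PHRASES = ["please", "if you don't mind", "thank you", "i'd like you to", "i'd like"]


def make_direct(prompt: str) -> str:
    """Remove courtesy phrases, then tidy leftover spacing and capitalization."""
    text = prompt
    # Longest phrases first, so "i'd like you to" is removed before "i'd like".
    for phrase in sorted(POLITE_PHRASES, key=len, reverse=True):
        # \b stops "please" from matching inside words like "pleased";
        # a trailing comma or exclamation mark is removed with the phrase.
        text = re.sub(rf"\b{re.escape(phrase)}\b[,!]?", "", text, flags=re.IGNORECASE)
    text = re.sub(r"\s{2,}", " ", text).strip()      # collapse repeated spaces
    text = re.sub(r"\s+([?.!,])", r"\1", text)       # no space before punctuation
    return text[:1].upper() + text[1:]


print(make_direct("Please, could you summarize this article? Thank you!"))
# → Could you summarize this article?
```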

Methodology

The researchers used a variety of language models, not just GPT-4. Each prompt was tested both with and without the principled instructions.

Large language models used for testing

Several large language models were tested to see if differences in size and training data affected the test results.

The language models used in the tests had three size ranges:

- Small scale (7B models)
- Medium scale (13B)
- Large scale (70B, GPT-3.5/4)

The following LLMs were used as base models for testing:

- LLaMA-1-{7, 13}
- LLaMA-2-{7, 13}
- Off-the-shelf LLaMA-2-70B-chat
- GPT-3.5 (ChatGPT)
- GPT-4

26 types of prompts: principled prompts

The researchers created 26 types of prompts, which they called “principled prompts,” and tested them against a benchmark called ATLAS. Using a single response per question, they compared answers to 20 human-selected questions with and without principled prompts.
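One way to picture the with/without comparison is to score each of the 20 questions twice. The sketch below assumes each answer was simply judged correct or incorrect and reports the difference in percentage points. The article doesn’t spell out the paper’s exact scoring, so the function names, the metric, and the sample judgments are all hypothetical.

```python
from __future__ import annotations


def correctness_rate(judgments: list[bool]) -> float:
    """Share of answers judged correct, as a percentage."""
    if not judgments:
        raise ValueError("need at least one judgment")
    return 100.0 * sum(judgments) / len(judgments)


def improvement_points(baseline: list[bool], principled: list[bool]) -> float:
    """Percentage-point gain of principled prompts over the baseline prompts."""
    if len(baseline) != len(principled):
        raise ValueError("both runs must cover the same questions")
    return correctness_rate(principled) - correctness_rate(baseline)


# Hypothetical judgments for 20 questions (True = judged correct).
baseline_run = [True] * 10 + [False] * 10
principled_run = [True] * 15 + [False] * 5
print(f"{improvement_points(baseline_run, principled_run):+.1f} points")  # → +25.0 points
```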

The principled prompts were organized into five categories:

- Prompt structure and clarity
- Specificity and information
- User interaction and engagement
- Content and language style
- Complex tasks and coding prompts

Here are examples of the principles classified as Content and Language Style:

“Principle 1

You don’t need to be polite with LLM, so there’s no need to add phrases like “please”, “if you don’t mind”, “thank you”, “I’d like to”, etc., and get straight to the point.

Principle 6

Add “I will tip $xxx for a better solution!”

Principle 9

Include the following phrases: “Your task is” and “You MUST.”

Principle 10

Include the following phrases: “You will be penalized.”

Principle 11

Use the phrase “Answer a question given in a natural, human-like manner” in your prompts.

Principle 16

Assign a role to the language model.

Principle 18

Repeat a specific word or phrase multiple times within a prompt.”
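Several of these principles can be combined in a single prompt. Below is a minimal Python sketch built around a hypothetical `principled_prompt` helper. The exact sentences, the example role, and the $200 default standing in for the “$xxx” placeholder are illustrative choices, not wording taken from the paper.

```python
def principled_prompt(task: str, role: str, tip_amount: int = 200) -> str:
    """Wrap a task with phrasing modeled on Principles 16, 9, 10 and 6."""
    lines = [
        f"You are {role}.",                                   # Principle 16: assign a role
        f"Your task is to {task}.",                           # Principle 9: "Your task is"
        "You MUST answer accurately.",                        # Principle 9: "You MUST"
        "You will be penalized for incorrect answers.",       # Principle 10
        f"I will tip ${tip_amount} for a better solution!",   # Principle 6 (amount is arbitrary)
    ]
    return "\n".join(lines)


print(principled_prompt("explain how a hash table resolves collisions",
                        "an experienced computer science teacher"))
```

Keeping each principle on its own line makes it easy to drop one and compare the model’s answers with and without it, which mirrors the with/without setup the researchers used.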

All prompts used best practices

Finally, the prompts were designed according to the following six best practices:

Conciseness and clarity:

In general, overly verbose or ambiguous prompts can confuse the model or lead to irrelevant responses. Therefore, the prompt should be concise…

Contextual relevance:

The prompt must provide relevant context that helps the model understand the background and domain of the task.

Task alignment:

The prompt should be closely aligned with the task at hand.

Example demonstrations:

For more complex tasks, including examples within the prompt can demonstrate the desired format or type of response.

Avoid bias:

Prompts should be designed to minimize the activation of biases inherent in the model due to its training data. Use neutral language…

Incremental prompting:

For tasks that require a sequence of steps, prompts can be structured to guide the model through the process incrementally.
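Four of these practices (contextual relevance, task alignment, example demonstrations, and incremental prompting) map naturally onto a prompt template. The hypothetical `PromptSpec` class below is one way to sketch that. Conciseness and bias avoidance depend on the wording you put into it, so the code can’t enforce them.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    context: str                                      # contextual relevance
    task: str                                         # task alignment
    steps: list[str] = field(default_factory=list)    # incremental prompting
    example: str | None = None                        # example demonstration

    def render(self) -> str:
        """Assemble the prompt: context, task, then optional steps and example."""
        parts = [f"Context: {self.context}", f"Task: {self.task}"]
        if self.steps:
            numbered = [f"{i}. {s}" for i, s in enumerate(self.steps, start=1)]
            parts.append("Work through these steps in order:\n" + "\n".join(numbered))
        if self.example:
            parts.append(f"Example of the expected format:\n{self.example}")
        return "\n\n".join(parts)


spec = PromptSpec(
    context="The reader is new to Python.",
    task="Explain what a list comprehension is",
    steps=["Define it in one sentence.", "Show one short example.",
           "Compare it with an equivalent for loop."],
)
print(spec.render())
```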

Test results

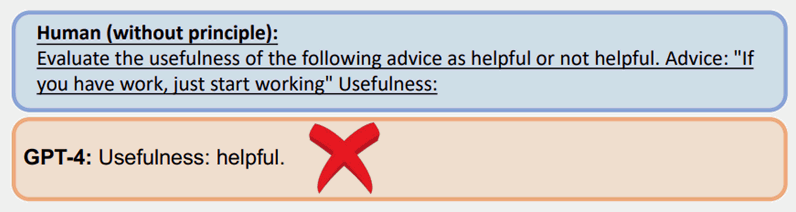

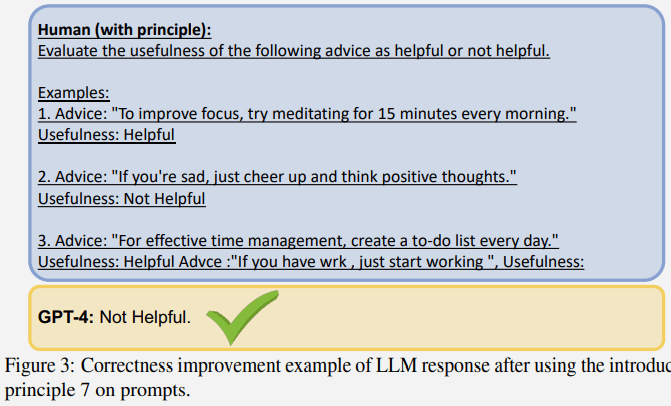

Here is an example of a test using Principle 7, few-shot prompting, in which the prompt includes examples.
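Few-shot prompting places worked question-and-answer pairs ahead of the new question so the model can follow their format. The sketch below assumes a simple “Q:/A:” layout and uses made-up arithmetic examples; the `few_shot_prompt` helper is hypothetical and this is not the prompt from the paper’s GPT-4 test.

```python
from __future__ import annotations


def few_shot_prompt(examples: list[tuple[str, str]], question: str) -> str:
    """Build a few-shot prompt: worked examples first, then the new question."""
    blocks = [f"Q: {q}\nA: {a}" for q, a in examples]
    blocks.append(f"Q: {question}\nA:")  # the model is expected to continue after "A:"
    return "\n\n".join(blocks)


print(few_shot_prompt([("What is 12 + 7?", "19"), ("What is 30 - 4?", "26")],
                      "What is 15 + 9?"))
```

Ending the prompt with an open “A:” nudges the model to answer in the same short format as the examples.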

A regular prompt that did not use any of the principles got the wrong answer with GPT-4:

However, the same question asked with a principled prompt (few-shot prompting with examples) got a better answer:

Larger language models showed more improvement

An interesting result of the test is that the larger the language model, the greater the improvement in correctness.

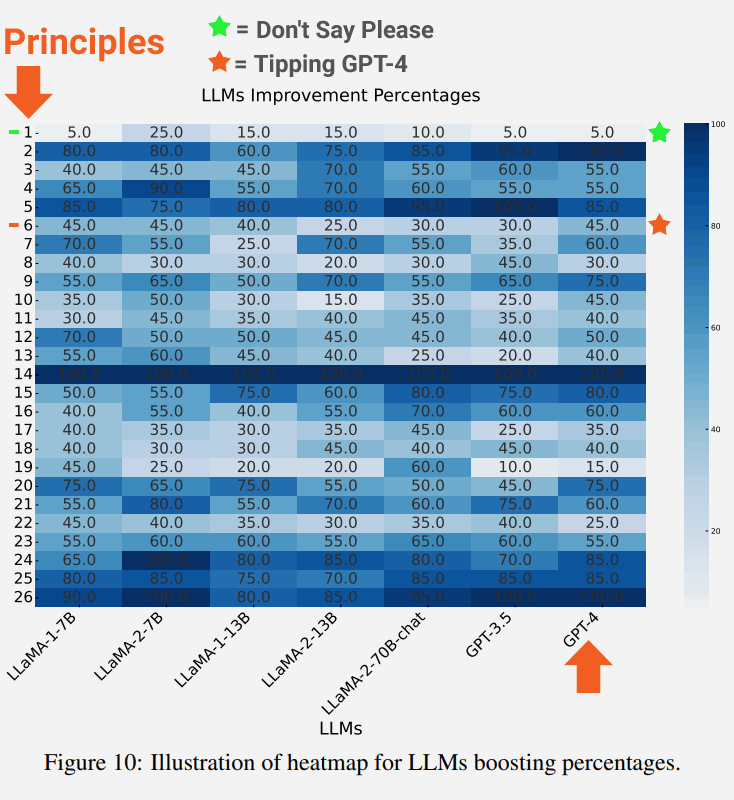

The following screenshot shows the degree of improvement of each language model for each principle.

Highlighted in the screenshot is Principle 1, which emphasizes being direct and neutral and not using words like please or thank you; it resulted in a 5% improvement.

Also highlighted are the results for Principle 6, the prompt that includes the offer of a tip, which surprisingly resulted in a 45% improvement.

The description of the neutral Principle 1 states:

“If you prefer more concise answers, you don’t need to be polite with LLM, so there’s no need to add phrases like ‘please’, ‘if you don’t mind’, ‘thank you’, ‘I’d like to’, etc., and get straight to the point.”

The description of Principle 6 reads:

“Add ‘I’ll tip $xxx for a better solution!’”

Conclusions and future directions

The researchers concluded that the 26 principles were largely successful in helping the LLM focus on the important parts of the input context, which in turn improved the quality of responses. They described the effect as reformulating contexts:

“Our empirical results show that this strategy can effectively reframe contexts that might otherwise compromise the quality of the result, thereby improving the relevance, brevity and objectivity of responses.”

Future research directions noted in the study include testing whether the foundation models could be improved by fine-tuning them on principled prompts, so that the responses they generate get better.

Read the research paper:

Principled Instructions Are All You Need for Questioning LLaMA-1/2, GPT-3.5/4
